
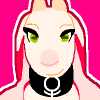

Top: Early workstation-type system with a rolltape monitor using large rollers, plus teletype printout display modes.

Middle-left: “Portable” mecha-computer table with miniaturized rolltapes in its display system. Power supply via compressed gas or other power-carrying fluid; this seems like the most flexible power supply option.

Bottom-right: Plug-in power supply via a rotating drill-like shaft that plugs into a socket. Heavier, requires keeping the computer stationary, and more dangerous thanks to the rapidly spinning shaft, but maybe better for heavy-duty power transfer?

Computer operation is, I assume, via a gears-and-shafts system a la the K’NEX computer and various LEGO pure-mechanical circuits, potentially highly miniaturized using techniques similar to those used for machining watch clockwork. The initial idea was taken from the Zuse Z1 computer, though I remain unclear on how it works exactly. Potential complications with mechanical systems: efficiency of energy transfer. How often are mechanical signal amplifiers needed to maintain a digital signal? How might this limit the complexity and/or miniaturization of a mechanical circuit of a given physical size?
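A rough way to put numbers on the amplifier question is sketched below. It assumes, purely for illustration, that every gear mesh passes on a fixed fraction of the torque it receives and that a signal stops registering once it falls below some fraction of its starting torque. The function name and both parameters are hypothetical, and the figures used are not measurements.

```python
def meshes_before_amplifier(mesh_efficiency: float, min_fraction: float) -> int:
    """Count how many gear meshes a signal can cross in series before it
    drops below min_fraction of its starting torque.

    Assumed model (not from any real mechanism): torque is multiplied by
    mesh_efficiency at every mesh.
    """
    if not (0.0 < mesh_efficiency < 1.0 and 0.0 < min_fraction < 1.0):
        raise ValueError("both arguments must be strictly between 0 and 1")

    torque = 1.0   # starting torque, normalised to 1
    meshes = 0
    # Keep crossing meshes while the next one still leaves a usable signal.
    while torque * mesh_efficiency >= min_fraction:
        torque *= mesh_efficiency
        meshes += 1
    return meshes
```

Under these assumptions, a 95% efficient mesh and a 50% threshold give `meshes_before_amplifier(0.95, 0.5) == 13`, so an amplifier would be needed roughly every thirteen meshes. Lower efficiency at small scales would shorten that distance and eat into the space available for logic.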

Other systems using fluids and other media are possible, but I am not sure how well these would scale to large computer systems. Gears and shafts seem most intuitively obvious to me.

Submission Information

- Views: 386

- Comments: 3

- Favorites: 2

- Rating: General

- Category: Visual / Sketch

Comments


Aye, probably. I don't foresee these reaching megahertz processor speeds, but do hope for a situation where text-based BBS style networking can arise. Potentially networking is carried out via hydraulics or pulled wire.

Yeah, modular design seems simplest/most scalable and parallels electronic computers in a general sense. Cards and chips, sort of. Miniaturization seems doable using watch-style manufacturing techniques; the limitation there is presumably the fragility of gear teeth/assemblies versus the power needed to drive a signal through the circuit. Such a series of computer sizes related to power supply sounds good, I'll keep it in mind.

I don't quite see how signal amplifiers increase processing time, although I suppose it makes sense in a way, since each one introduces another interface between mechanical parts into the system. Maybe I'm missing some difference between mechanical and electric amplification (signal amplification rather than force multiplication, just to be 100% specific), because even if it does add delay, I don't see it subtracting a lot of FLOPS/IPS. Though I could see it being a problem in engineering higher-performance systems. In that case I assume mainframes may also have larger, sturdier gears/axles than other machines.

Why organic lubricants in particular? I'm not criticizing, my knowledge is just lacking here. I've heard good things about lithium greases.

-

I was thinking about cascading amplifiers. One or two amplifier stages between modules don't add much latency. Imagine a huge mechanical computer, the size of a football field. There have to be long data lines to connect its various parts. A single bus driver might have to drive 10 or 20 different input gears, scattered around the place. If one torque amplifier can't reach them all, you have to cascade amplifiers, and that can introduce latencies.
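To make the cascading point concrete, here is a minimal sketch. It assumes each torque amplifier can drive at most a fixed number of inputs, that amplifiers are arranged as a tree, and that every stage adds the same delay. The names `cascade_stages`, `bus_latency`, `max_fanout` and `stage_delay_s` are made up for illustration.

```python
def cascade_stages(inputs_to_drive: int, max_fanout: int) -> int:
    """Number of amplifier stages needed so one bus driver can reach
    inputs_to_drive gears, if each amplifier drives at most max_fanout
    outputs (assumed tree arrangement)."""
    if inputs_to_drive < 1:
        raise ValueError("need at least one input to drive")
    if max_fanout < 2:
        raise ValueError("max_fanout must be at least 2")

    stages = 1
    reach = max_fanout          # inputs reachable with the current depth
    while reach < inputs_to_drive:
        reach *= max_fanout     # one more layer of amplifiers
        stages += 1
    return stages


def bus_latency(inputs_to_drive: int, max_fanout: int, stage_delay_s: float) -> float:
    """Total delay from driver to the farthest input, assuming every
    amplifier stage adds the same stage_delay_s seconds."""
    return cascade_stages(inputs_to_drive, max_fanout) * stage_delay_s
```

With these assumed numbers, driving 20 input gears with amplifiers that can each handle 4 takes three stages, so a half-second stage delay becomes 1.5 seconds on that bus. A driver that can reach all 20 directly needs only one stage.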

Also, clock skew. If you transfer data over a parallel bus, you have to be sure all 8 or 16 or 32 bits/gears have reached their desired position before sampling the data. Even if only 1 bit/gear is late, the decoder needs to wait for it.
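The skew problem boils down to "the slowest gear sets the pace". A small sketch, assuming we somehow know when each bit's gear settles (the arrival times and the optional `setup_margin_s` are hypothetical values, not measurements):

```python
from typing import Sequence


def bus_skew(arrival_times_s: Sequence[float]) -> float:
    """Spread between the first and last bit/gear to settle on the bus."""
    if not arrival_times_s:
        raise ValueError("need at least one arrival time")
    return max(arrival_times_s) - min(arrival_times_s)


def earliest_sample_time(arrival_times_s: Sequence[float],
                         setup_margin_s: float = 0.0) -> float:
    """Earliest moment the decoder may sample: after the latest bit has
    settled, plus an optional safety margin."""
    if not arrival_times_s:
        raise ValueError("need at least one arrival time")
    return max(arrival_times_s) + setup_margin_s
```

For example, if three gears of a 4-bit bus settle at 0.25 s and one straggler settles at 1.0 s, the decoder cannot sample before 1.0 s, and the skew is 0.75 s of pure waiting for the other three bits.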

Haha, there's also a very elegant solution to this! Different modules could work asynchronously to each other. Large computers could be built with a superscalar architecture, so that a chunk of data is processed by multiple logic cores at the same time. You can choose to make an ALU using 4 pieces of 4-bit modules, or 8 pieces of 2-bit modules, depending on how much air/steam pressure and flow you have available. Large pressure, small flow: use 16-bit adders. Light pressure, big flow: use 1-bit or 2-bit adders.
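Building one wide adder from narrower modules can be sketched like this. It assumes the simplest wiring, where each module's carry-out feeds the next module's carry-in (a ripple-carry chain); the comment above doesn't say how the slices would be linked, so that part is an assumption, and the function names are illustrative only.

```python
def add_slice(a: int, b: int, carry_in: int, width: int) -> tuple[int, int]:
    """One adder module of the given bit width: returns (sum, carry_out)."""
    total = a + b + carry_in
    mask = (1 << width) - 1
    return total & mask, total >> width


def add_with_slices(a: int, b: int, word_bits: int, slice_bits: int) -> tuple[int, int]:
    """Add two word_bits-wide numbers using word_bits // slice_bits
    identical modules chained by their carries (assumed ripple-carry)."""
    if word_bits % slice_bits != 0:
        raise ValueError("word_bits must be a multiple of slice_bits")

    mask = (1 << slice_bits) - 1
    result = 0
    carry = 0
    for i in range(word_bits // slice_bits):
        shift = i * slice_bits
        # Feed this module its own chunk of each operand plus the carry
        # handed over by the previous module.
        part, carry = add_slice((a >> shift) & mask, (b >> shift) & mask,
                                carry, slice_bits)
        result |= part << shift
    return result, carry
```

The same 16-bit sum comes out whether it is built from four 4-bit modules (`add_with_slices(1234, 4321, 16, 4)`) or eight 2-bit modules (`add_with_slices(1234, 4321, 16, 2)`): both return `(5555, 0)`. What changes is how many modules the carry has to pass through, which is where the pressure/flow trade-off would come in.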

Okay, windmill-powered analytical engines? Takes overclocking to a whole new level.

I meant something like lubricants extracted or harvested from living tissue. For example: out there exists a special kind of wild horse centipede whose leg joints excrete a lubricant far better than any created by distilling petroleum. Mercenaries go and hunt them down, extract the lubricant, and sell it to computing companies.


kishniev

Using standardized modules would be the right way. Like: a 4-bit adder module, a program reader module, a decoder module, multiplexer, de-multiplexer, bus coupler, etc.

A computer is built by connecting these modules together.

Direct compressed air sounds good for smaller computers. Bigger ones might need a lot of buffer tanks, maybe integrated steam-powered air compressors. To scale: calculators running on mechanical power, minicomputers on compressed air, and mainframes on pressurized steam.

Mechanical signal amplifiers would only increase latency, and thus limit the maximum computational speed. There's no limit on how many digital amplifiers you could place in a line. An overly complex design could be a slower design.

I think there's going to be a lot of mechanical loss in lengthy drill-shafts. An organic super-lubricant might come in handy.